MPLS, or Multiprotocol Label Switching, is a technique for steering data across complex networks using short labels instead of destination IP addresses. The ingress router adds a label to each packet, which then follows a predetermined Label-Switched Path (LSP). A label is a locally significant 20-bit value: each label-switching router uses it as an exact-match key into its label forwarding table, swaps it for the outgoing label, and forwards the packet without a longest-prefix IP lookup. On modern hardware the lookup-speed advantage is modest; the larger benefit is control. Label switching lets operators send traffic along paths other than the IGP shortest path and, together with traffic engineering and quality-of-service (QoS) mechanisms, reserve bandwidth for and prioritize critical applications like voice and video.
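
To make the short label concrete: in the MPLS encoding (RFC 3032), each label stack entry is 32 bits, made up of a 20-bit label, a 3-bit Traffic Class field (formerly called EXP), a bottom-of-stack bit, and an 8-bit TTL. The sketch below packs and unpacks one entry; the class and function names are illustrative, not taken from any library.

```python
import struct
from dataclasses import dataclass


@dataclass(frozen=True)
class LabelEntry:
    label: int  # 20-bit label value, 0..1048575
    tc: int     # 3-bit Traffic Class (formerly EXP)
    bos: bool   # bottom-of-stack (S) bit: True on the last entry
    ttl: int    # 8-bit time to live


def pack_entry(e: LabelEntry) -> bytes:
    """Encode one label stack entry as 4 bytes in network byte order."""
    if not (0 <= e.label < 1 << 20 and 0 <= e.tc < 8 and 0 <= e.ttl < 256):
        raise ValueError("label stack entry field out of range")
    word = (e.label << 12) | (e.tc << 9) | (int(e.bos) << 8) | e.ttl
    return struct.pack("!I", word)


def unpack_entry(data: bytes) -> LabelEntry:
    """Decode 4 bytes back into a label stack entry."""
    (word,) = struct.unpack("!I", data)
    return LabelEntry(
        label=word >> 12,
        tc=(word >> 9) & 0x7,
        bos=bool((word >> 8) & 0x1),
        ttl=word & 0xFF,
    )


if __name__ == "__main__":
    entry = LabelEntry(label=16004, tc=5, bos=True, ttl=64)
    raw = pack_entry(entry)
    print(raw.hex())  # 03e84b40
    assert unpack_entry(raw) == entry
```

Because the label sits in a fixed-width field at a fixed offset, a router can use it directly as an exact-match key, which is what makes label-based forwarding simple.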

MPLS is widely used in service provider networks, where it underpins services such as VPNs (Virtual Private Networks) and traffic engineering. In a typical MPLS setup, labels are pushed at the ingress edge and popped at or near the egress edge, while core routers only swap labels. Because the core forwards on labels alone, core routers need not carry customer VPN routes or the full Internet routing table. As the "multiprotocol" in its name suggests, MPLS can carry many payload types (IPv4, IPv6, Ethernet frames, and others) over different link technologies. Because operators can steer traffic dynamically and reroute it around congestion or failures, MPLS improves reliability and robustness, which makes it a common choice for large enterprise and ISP networks.
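
The edge/core division of labor can be sketched as a toy trace of one packet. Each router holds a label forwarding table keyed on the incoming label: the ingress pushes a label, transit routers swap it, and the egress pops it and falls back to an IP lookup. Router names, labels, and the table layout are hypothetical, and penultimate hop popping (where the second-to-last router removes the label) is left out for simplicity.

```python
from typing import Dict, List, Optional, Tuple

# LFIB entry: incoming label -> (action, outgoing label or None, next hop or None)
Lfib = Dict[int, Tuple[str, Optional[int], Optional[str]]]

# Hypothetical LSP PE1 -> P1 -> P2 -> PE2 for prefix 192.0.2.0/24.
# The ingress maps the prefix (FEC) to a label and next hop instead of using an LFIB.
INGRESS_FEC = {"192.0.2.0/24": (100, "P1")}

LFIBS: Dict[str, Lfib] = {
    "P1": {100: ("swap", 200, "P2")},
    "P2": {200: ("swap", 300, "PE2")},
    "PE2": {300: ("pop", None, None)},  # egress: remove the label, route the IP packet
}


def forward(prefix: str) -> List[str]:
    """Trace a packet through the LSP and return a log of per-hop actions."""
    label, hop = INGRESS_FEC[prefix]
    stack = [label]  # ingress edge router pushes the label
    log = [f"PE1 push {label}, send to {hop}"]
    while stack:
        in_label = stack[-1]
        action, out_label, next_hop = LFIBS[hop][in_label]  # exact-match lookup
        if action == "swap":
            stack[-1] = out_label
            log.append(f"{hop} swap {in_label}->{out_label}, send to {next_hop}")
            hop = next_hop
        else:  # "pop": last label removed, packet leaves the LSP
            stack.pop()
            log.append(f"{hop} pop {in_label}, IP lookup for {prefix}")
    return log


if __name__ == "__main__":
    for line in forward("192.0.2.0/24"):
        print(line)
```

Only the ingress consults the prefix; P1 and P2 never look at the IP header, which is why they need no routes for the destination.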

MPLS Traffic Engineering (MPLS-TE) is a technology that enhances the capabilities of MPLS to enable more granular control over traffic flow within a network. This is achieved by manipulating traffic paths to optimize resource usage, avoid congestion, and meet specific service requirements, like bandwidth guarantees or low latency. Here are key methods by which MPLS-TE can manipulate paths and traffic flow:

MPLS-TE Traffic Manipulation Options

Explicit Routing with Constraint-Based Routing (CBR)

- Constraint-based routing allows MPLS-TE to build Label-Switched Paths (LSPs) along a path chosen to satisfy the tunnel's requirements, rather than simply following the IGP shortest path.

- Explicit path setup lets network operators pin an LSP to an exact, operator-defined list of hops, for example to keep it off congested or unreliable links.

- Typical constraints include requested bandwidth, link affinity (administrative attributes), maximum hop count, and the TE metric; some newer implementations can also use link delay.
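
Dynamic constraint-based routing is commonly implemented as Constrained Shortest Path First (CSPF): prune every link that violates a constraint, then run an ordinary shortest-path search over what remains. A minimal sketch, assuming a hypothetical topology annotated with TE metrics and unreserved bandwidth in kbps; it checks only the bandwidth constraint, whereas real implementations also apply affinity, hop limits, and tie-breaking rules.

```python
import heapq
from typing import Dict, List, Optional, Tuple

# Hypothetical directed links: (from, to) -> (te_metric, unreserved_kbps)
Links = Dict[Tuple[str, str], Tuple[int, int]]

EXAMPLE_LINKS: Links = {
    ("R1", "R2"): (10, 5000),
    ("R2", "R3"): (10, 5000),
    ("R1", "R4"): (15, 20000),
    ("R4", "R3"): (15, 20000),
}


def cspf(links: Links, src: str, dst: str, bw_kbps: int) -> Optional[List[str]]:
    """Lowest-metric path whose every link has at least bw_kbps unreserved."""
    # Step 1: prune links that cannot satisfy the bandwidth constraint.
    adj: Dict[str, List[Tuple[str, int]]] = {}
    for (a, b), (metric, unreserved) in links.items():
        if unreserved >= bw_kbps:
            adj.setdefault(a, []).append((b, metric))

    # Step 2: plain Dijkstra on the pruned graph.
    dist = {src: 0}
    prev: Dict[str, str] = {}
    heap = [(0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            break
        if d > dist[node]:
            continue  # stale heap entry
        for nbr, metric in adj.get(node, []):
            nd = d + metric
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))

    if dst not in dist:
        return None  # no path satisfies the constraints
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return path[::-1]


if __name__ == "__main__":
    print(cspf(EXAMPLE_LINKS, "R1", "R3", 1000))  # ['R1', 'R2', 'R3']
    print(cspf(EXAMPLE_LINKS, "R1", "R3", 8000))  # ['R1', 'R4', 'R3']
```

With a small request the shorter R1-R2-R3 path wins; once the request exceeds that path's unreserved bandwidth, CSPF falls back to the longer R1-R4-R3 path.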

```
! Define an explicit path list for the TE tunnel
Router(config)# ip explicit-path name Path_R1_R3
! First hop: IP of Router2
Router(config-ip-expl-path)# next-address 10.1.1.2
! Second hop: IP of Router3
Router(config-ip-expl-path)# next-address 10.1.2.2

! Configure the TE tunnel
Router(config)# interface Tunnel1
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
! Tail-end of the tunnel (Router3)
Router(config-if)# tunnel destination 10.1.3.3
Router(config-if)# tunnel mpls traffic-eng path-option 1 explicit name Path_R1_R3
! Bandwidth constraint, in kbps
Router(config-if)# tunnel mpls traffic-eng bandwidth 1000
Router(config-if)# no shutdown
```

Traffic Engineering Database (TED)

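- The TED holds the TE view of the topology: for each link, its TE metric, maximum and unreserved (still reservable) bandwidth per priority level, and administrative attribute flags. Routers learn this through TE extensions to the IGP (OSPF-TE or IS-IS TE).

- The head-end runs its path computation against the TED, so a new LSP is placed only on links that still have enough unreserved bandwidth and match its other constraints. The TED tracks reservations rather than measured traffic, and it is refreshed by IGP flooding, so it approximates rather than mirrors live link utilization.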
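
A TED link record can be sketched as follows. Following the OSPF-TE encoding (RFC 3630), unreserved bandwidth is tracked separately for each of eight priority levels (0 highest, 7 lowest): a reservation held at priority h reduces the bandwidth available at priority h and every numerically higher level, because higher-priority LSPs could preempt it. The class and field names are illustrative assumptions, not any router's internal structure.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

NUM_PRIORITIES = 8  # priority 0 is highest, 7 is lowest


@dataclass
class TeLink:
    """One TE link as a head-end might store it in its TED (illustrative)."""
    te_metric: int
    max_reservable_kbps: int
    attribute_flags: int = 0
    # (bandwidth_kbps, hold_priority) for each LSP currently reserved on the link
    reservations: List[Tuple[int, int]] = field(default_factory=list)

    def unreserved(self) -> List[int]:
        """Unreserved bandwidth as seen by an LSP at each priority level 0..7."""
        result = []
        for p in range(NUM_PRIORITIES):
            # Only reservations held at priority p or better count against level p.
            held = sum(bw for bw, hold in self.reservations if hold <= p)
            result.append(self.max_reservable_kbps - held)
        return result

    def admits(self, bw_kbps: int, setup_priority: int) -> bool:
        """Would path computation consider this link usable for a new LSP?"""
        return self.unreserved()[setup_priority] >= bw_kbps


if __name__ == "__main__":
    link = TeLink(te_metric=10, max_reservable_kbps=10000,
                  reservations=[(4000, 1), (3000, 5)])
    print(link.unreserved())  # [10000, 6000, 6000, 6000, 6000, 3000, 3000, 3000]
```

The same link can therefore look full to a low-priority LSP and available to a high-priority one.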

```
! Enable traffic engineering on OSPF
Router(config)# router ospf 1
Router(config-router)# mpls traffic-eng router-id Loopback0
Router(config-router)# mpls traffic-eng area 0

! Ensure interfaces participate in TE
Router(config)# interface GigabitEthernet0/1
Router(config-if)# ip router ospf 1 area 0
Router(config-if)# mpls traffic-eng tunnels
```

Resource Reservation with RSVP-TE

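- RSVP-TE (Resource Reservation Protocol with TE extensions) is used to signal and reserve resources along the selected path.

- It sets up traffic-engineered LSPs (TE LSPs) hop by hop: a Path message travels from the head-end toward the tail-end along the chosen route, and Resv messages travel back upstream, distributing labels and committing the requested bandwidth at each hop.

- With RSVP-TE, MPLS-TE keeps the total reserved on each link within its reservable bandwidth, so LSPs carrying voice or video are not placed on links that are already fully booked. This lowers the risk of packet loss and jitter, although the reservation is not enforced in the forwarding plane without QoS.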
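
Hop-by-hop signaling can be sketched as a simplified admission check: the Path message walks downstream and every hop verifies it can admit the request; if all can, the Resv message travels back upstream, committing bandwidth and handing out labels. If any link lacks bandwidth, setup fails and nothing is committed. This omits real RSVP-TE details such as soft-state refreshes, error messages, and preemption; the data layout and label numbering are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Link = Tuple[str, str]


def signal_lsp(path: List[str], available: Dict[Link, int], bw_kbps: int,
               first_free_label: int = 1000) -> Optional[Dict[str, int]]:
    """Simplified RSVP-TE setup along an explicit path.

    Returns the label each downstream router advertised to its upstream
    neighbor, or None if some link lacks bandwidth (nothing is reserved).
    """
    links = list(zip(path, path[1:]))

    # Path message (downstream): every hop checks it can admit the request.
    for link in links:
        if available[link] < bw_kbps:
            return None  # setup fails; no reservation anywhere

    # Resv message (upstream): from the tail-end back toward the head-end,
    # commit the bandwidth and allocate a label at each downstream node.
    labels: Dict[str, int] = {}
    for i, link in enumerate(reversed(links)):
        available[link] -= bw_kbps
        labels[link[1]] = first_free_label + i  # hypothetical label numbering
    return labels


if __name__ == "__main__":
    avail = {("R1", "R2"): 5000, ("R2", "R3"): 3000}
    print(signal_lsp(["R1", "R2", "R3"], avail, 2000))
    print(avail)
    print(signal_lsp(["R1", "R2", "R3"], avail, 2000))  # R2-R3 now too full
```

Checking every hop before committing mirrors the all-or-nothing nature of LSP setup: a half-reserved path would waste bandwidth without carrying traffic.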

```
! Enable MPLS-TE globally (RSVP itself is enabled per interface below)
Router(config)# mpls traffic-eng tunnels
! Enable RSVP hellos, used to detect neighbor failures
Router(config)# ip rsvp signaling hello

! Enable RSVP on each interface used by the MPLS-TE tunnel
Router(config)# interface GigabitEthernet0/1
! Allow up to 10000 kbps of RSVP reservations on this link
Router(config-if)# ip rsvp bandwidth 10000

! Configure an MPLS-TE tunnel signaled with RSVP-TE
Router(config)# interface Tunnel2
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
Router(config-if)# tunnel destination 10.1.3.3
! Request 2000 kbps along the path
Router(config-if)# tunnel mpls traffic-eng bandwidth 2000
! Let the head-end compute the path from the TED
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic
Router(config-if)# no shutdown
```

Fast Reroute (FRR)

- Fast Reroute (RFC 4090) protects TE LSPs against link or node failures with minimal disruption.

- Backup LSPs are signaled in advance. When a failure is detected, the router immediately upstream of it (the point of local repair, PLR) switches affected traffic onto the backup, typically within tens of milliseconds (50 ms is the commonly cited target), while the head-end computes a new primary path in the background.
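
With facility backup, one of the two FRR methods in RFC 4090, a single backup tunnel around a protected link can carry many LSPs. A sketch of what the point of local repair does to each packet, under simplifying assumptions: it performs its normal swap to the label the next hop (the merge point) expects, then pushes the backup tunnel's label on top. After the backup label is removed, the merge point sees the same label as on the primary path. Labels and parameter names are hypothetical.

```python
from typing import List


def plr_forward(stack: List[int], swap_to: int, primary_up: bool,
                backup_label: int) -> List[int]:
    """Return the label stack the PLR sends (top of stack is the last element).

    swap_to: label the merge point advertised for this LSP on the primary path.
    backup_label: label for the pre-signaled backup tunnel's first hop.
    """
    out = stack.copy()
    out[-1] = swap_to  # normal LSP swap, identical in both cases
    if not primary_up:
        out.append(backup_label)  # tunnel the packet around the failure
    return out


if __name__ == "__main__":
    print(plr_forward([100], swap_to=200, primary_up=True, backup_label=900))   # [200]
    print(plr_forward([100], swap_to=200, primary_up=False, backup_label=900))  # [200, 900]
```

Because the inner label is unchanged, the merge point forwards rerouted packets exactly as before, so no new signaling is needed before traffic flows again.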

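```
! Request FRR protection for the LSP at the head-end
Router(config)# interface Tunnel2
Router(config-if)# tunnel mpls traffic-eng fast-reroute
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic
Router(config-if)# no shutdown

! On the PLR, attach a backup tunnel to the protected link
! (Tunnel10 is a hypothetical backup tunnel whose path avoids this link)
Router(config)# interface GigabitEthernet0/1
Router(config-if)# mpls traffic-eng backup-path Tunnel10
```

Load Balancing and Path Diversity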

- MPLS-TE supports load balancing by distributing traffic across multiple LSPs toward the same destination. This is useful when a traffic demand exceeds what a single path can carry. Traffic can be shared equally or, on platforms that support unequal-cost load balancing, in proportion to each tunnel's bandwidth.

- Path diversity places LSPs on disjoint paths, so critical traffic split across them does not share a single point of failure, improving network redundancy.
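
Load sharing across TE tunnels is normally done per flow, so that packets of one flow stay in order on one LSP. A minimal sketch of bandwidth-proportional sharing: each tunnel gets hash buckets in proportion to its weight, and each flow's 5-tuple is hashed into a bucket. Tunnel7, the weights, and CRC32 as the hash function are assumptions for illustration, not a description of any particular router's hashing.

```python
import zlib
from functools import reduce
from math import gcd
from typing import Dict, List, Tuple

# (src IP, dst IP, protocol, src port, dst port)
FiveTuple = Tuple[str, str, int, int, int]


def build_buckets(weights: Dict[str, int]) -> List[str]:
    """Give each tunnel a number of buckets proportional to its weight."""
    g = reduce(gcd, weights.values())
    buckets: List[str] = []
    for tunnel, weight in sorted(weights.items()):
        buckets.extend([tunnel] * (weight // g))
    return buckets


def pick_tunnel(flow: FiveTuple, buckets: List[str]) -> str:
    """Hash the flow so every packet of the same flow uses the same tunnel."""
    key = "|".join(map(str, flow)).encode()
    return buckets[zlib.crc32(key) % len(buckets)]


if __name__ == "__main__":
    table = build_buckets({"Tunnel3": 2000, "Tunnel7": 1000})
    print(table)  # ['Tunnel3', 'Tunnel3', 'Tunnel7']
    print(pick_tunnel(("10.0.0.1", "192.0.2.10", 6, 40000, 443), table))
```

Hashing per flow trades perfect balance for in-order delivery: a single large flow still lands on one tunnel.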

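```
! Path-options are tried in order: option 2 is a fallback used only if
! option 1 cannot be signaled. For load sharing, build a second tunnel
! to the same destination and announce both into the routing table.
Router(config)# interface Tunnel3
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
Router(config-if)# tunnel destination 10.1.3.3
Router(config-if)# tunnel mpls traffic-eng autoroute announce
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic
Router(config-if)# tunnel mpls traffic-eng path-option 2 explicit name Path_R1_R3
Router(config-if)# no shutdown
```

Bandwidth Guarantees and Traffic Prioritization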

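- MPLS-TE can reserve bandwidth for specific traffic flows, so that real-time or other high-priority traffic is placed only on paths with enough capacity. The reservation is admission control in the control plane: it does not police or queue packets by itself, so forwarding-plane QoS is still needed to enforce it.

- Setup and hold priorities (0 highest, 7 lowest) decide which LSPs may preempt others when bandwidth runs short. DiffServ-aware TE (DS-TE) goes further by letting LSPs reserve bandwidth per traffic class, while the Traffic Class (EXP) bits in each label tell core routers how to queue the packet.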
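
Setup and hold priorities (priority 0 is the highest, 7 the lowest) determine which LSPs may preempt others. A simplified sketch of the decision on a single link: a new LSP with setup priority s may displace existing LSPs whose hold priority is numerically greater than s, lowest-priority victims first, and only if doing so frees enough bandwidth. The LSP names are hypothetical, and real implementations add tie-breaking rules and soft preemption.

```python
from typing import List, Optional, Tuple

# (name, bandwidth_kbps, hold_priority); priority 0 is highest, 7 is lowest
Lsp = Tuple[str, int, int]


def admit(capacity_kbps: int, existing: List[Lsp], bw_kbps: int,
          setup_priority: int) -> Optional[List[str]]:
    """Names of LSPs to preempt (possibly empty), or None if the new LSP cannot fit."""
    free = capacity_kbps - sum(bw for _, bw, _ in existing)
    if free >= bw_kbps:
        return []  # fits without preemption
    # Only LSPs held at a lower priority (numerically higher) are preemptable.
    candidates = sorted(
        (lsp for lsp in existing if lsp[2] > setup_priority),
        key=lambda lsp: lsp[2],
        reverse=True,  # try the lowest-priority LSPs first
    )
    victims: List[str] = []
    for name, bw, _ in candidates:
        victims.append(name)
        free += bw
        if free >= bw_kbps:
            return victims
    return None  # even preempting every eligible LSP is not enough


if __name__ == "__main__":
    lsps = [("voice", 3000, 0), ("bulk-a", 4000, 7), ("bulk-b", 2000, 5)]
    print(admit(10000, lsps, 5000, setup_priority=1))  # ['bulk-a']
    print(admit(10000, lsps, 5000, setup_priority=7))  # None
```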

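```
! Set bandwidth requirement for TE tunnel
Router(config)# interface Tunnel4
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
Router(config-if)# tunnel destination 10.1.3.3
! Reserve 5000 kbps (control-plane admission, not policing)
Router(config-if)# tunnel mpls traffic-eng bandwidth 5000
! Setup and hold priority (0 = highest, 7 = lowest)
Router(config-if)# tunnel mpls traffic-eng priority 1 1
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic
Router(config-if)# no shutdown
```

Administrative Policies and Affinity-Based Routing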

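- Administrative policies (affinity or link coloring) constrain which links an LSP may use. Each link carries a 32-bit attribute-flags value, its "color", and each tunnel specifies an affinity and a mask; a link is acceptable only if its flags, ANDed with the mask, equal the tunnel's affinity ANDed with the same mask. Preference among acceptable links is expressed separately, through the TE metric (administrative weight).

- Because links are colored and tunnels select colors, operators can reserve certain links for certain traffic types (e.g., customer A’s traffic can only use certain links), enabling more precise traffic segregation and adherence to SLA requirements.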
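
The affinity check reduces to one bitwise comparison per link: a link is acceptable when its attribute flags, masked by the tunnel's mask, equal the tunnel's affinity under the same mask (the semantics of the classic Cisco IOS affinity/mask setting). A sketch with hypothetical interface names; path computation would drop every link that fails the check before searching for a path.

```python
from typing import Dict, List


def link_ok(link_flags: int, affinity: int, mask: int) -> bool:
    """True if the link's attribute flags satisfy the tunnel's affinity/mask."""
    return (link_flags & mask) == (affinity & mask)


def usable_links(links: Dict[str, int], affinity: int, mask: int) -> List[str]:
    """Names of links the tunnel may use."""
    return [name for name, flags in links.items() if link_ok(flags, affinity, mask)]


if __name__ == "__main__":
    links = {"Gi0/1": 0x00, "Gi0/2": 0x10, "Gi0/3": 0x11}
    # Require bit 0x10 (as in the Tunnel5 example): only colored links qualify.
    print(usable_links(links, affinity=0x10, mask=0x10))  # ['Gi0/2', 'Gi0/3']
    # Exclude bit 0x10: only uncolored links qualify.
    print(usable_links(links, affinity=0x00, mask=0x10))  # ['Gi0/1']
```

The mask makes the same mechanism serve both "must include" and "must avoid" policies, and bits outside the mask are ignored.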

```
! Color a link: set attribute flag 0x10 on the interface
Router(config)# interface GigabitEthernet0/2
Router(config-if)# mpls traffic-eng attribute-flags 0x10

! Restrict the tunnel to links whose 0x10 bit is set
! (every link on the path must carry it)
Router(config)# interface Tunnel5
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
Router(config-if)# tunnel destination 10.1.3.3
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic
Router(config-if)# tunnel mpls traffic-eng affinity 0x10 mask 0x10
Router(config-if)# no shutdown
```

Dynamic Path Computation with Path Computation Element (PCE)

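- The Path Computation Element (PCE) is a network component, often centralized, that computes paths for MPLS-TE LSPs on behalf of head-end routers. The routers act as Path Computation Clients (PCCs) and talk to the PCE over PCEP.

- Because a PCE holds a network-wide view (and, in a stateful PCE, knowledge of existing LSPs), it can compute paths a single head-end cannot, such as inter-area paths and globally coordinated placements, and it offloads path computation from the routers, which helps in large networks.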
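
A centralized PCE's advantage is that it sees every request. A toy sketch of a stateful PCE: it keeps one shared copy of link bandwidth, computes each requested path against it (fewest hops via breadth-first search, a simplification of real objective functions), and records the reservation so later requests see the updated state. PCEP messaging is omitted, and the class and topology are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Link = Tuple[str, str]


class ToyPce:
    """Centralized path computation with a network-wide bandwidth view."""

    def __init__(self, links: Dict[Link, int]):
        self.available = dict(links)  # (from, to) -> unreserved kbps

    def _neighbors(self, node: str, bw: int) -> List[str]:
        return [b for (a, b), free in self.available.items() if a == node and free >= bw]

    def request(self, src: str, dst: str, bw: int) -> Optional[List[str]]:
        """Compute a fewest-hop path with enough bandwidth and reserve it."""
        prev: Dict[str, Optional[str]] = {src: None}
        queue = deque([src])
        while queue:
            node = queue.popleft()
            if node == dst:
                break
            for nbr in self._neighbors(node, bw):
                if nbr not in prev:
                    prev[nbr] = node
                    queue.append(nbr)
        if dst not in prev:
            return None  # no feasible path in the current state
        path = [dst]
        while prev[path[-1]] is not None:
            path.append(prev[path[-1]])
        path.reverse()
        for link in zip(path, path[1:]):
            self.available[link] -= bw  # stateful: remember the reservation
        return path


if __name__ == "__main__":
    pce = ToyPce({("R1", "R2"): 5000, ("R2", "R3"): 5000, ("R1", "R4"): 5000,
                  ("R4", "R5"): 5000, ("R5", "R3"): 5000})
    print(pce.request("R1", "R3", 4000))  # ['R1', 'R2', 'R3']
    print(pce.request("R1", "R3", 4000))  # ['R1', 'R4', 'R5', 'R3']
```

The second request avoids R1-R2 only because the PCE remembered the first reservation; independent head-ends with stale views could both pick the same link.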

```
! Point the router (PCC) at an external PCE over PCEP
! (IOS XE syntax; 10.1.1.1 is a hypothetical local loopback address)
Router(config)# mpls traffic-eng pcc peer 10.1.4.4 source 10.1.1.1

! Configure the tunnel to use PCE for path computation
Router(config)# interface Tunnel6
Router(config-if)# ip unnumbered Loopback0
Router(config-if)# tunnel mode mpls traffic-eng
Router(config-if)# tunnel destination 10.1.3.3
Router(config-if)# tunnel mpls traffic-eng path-option 1 dynamic pce
Router(config-if)# no shutdown
```

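These examples demonstrate basic configurations for MPLS-TE features using classic Cisco IOS/IOS XE syntax; command names and availability differ on IOS XR and on other vendors' platforms. Advanced setups may require customization based on network architecture, device capabilities, and specific application needs.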

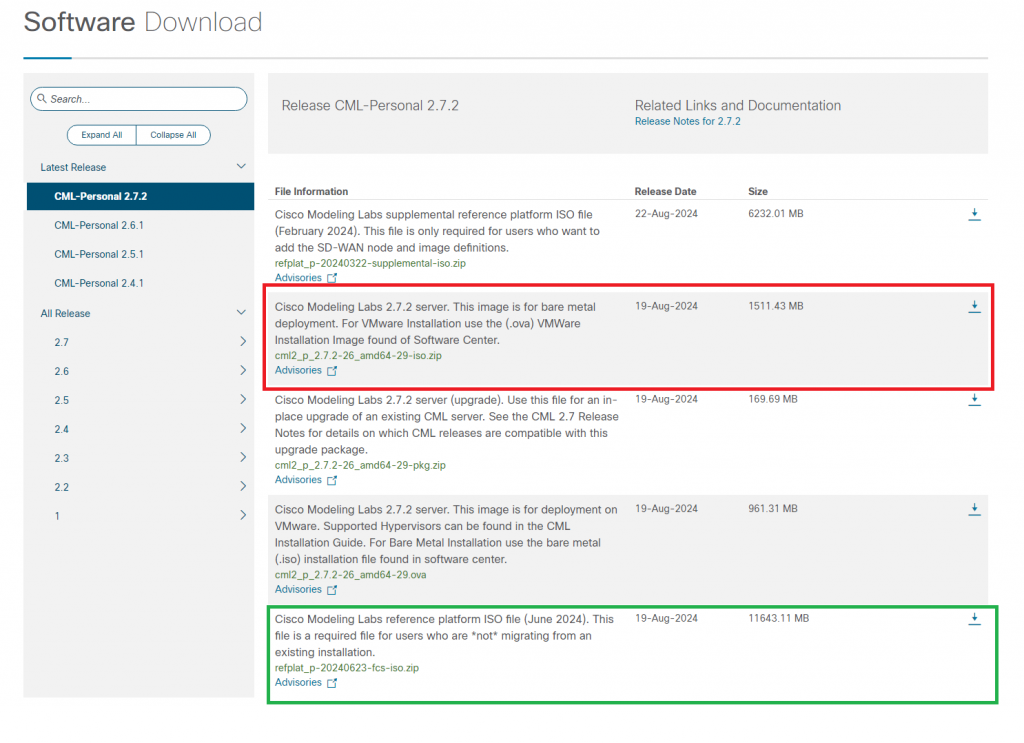

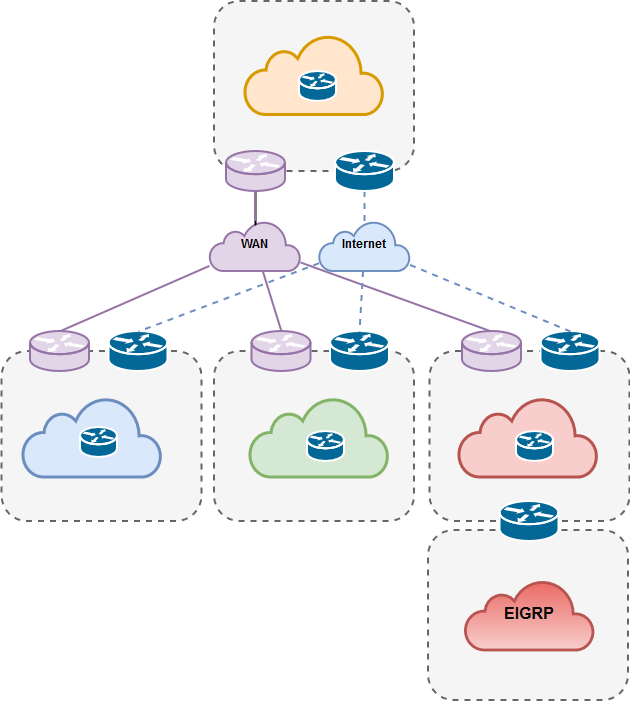

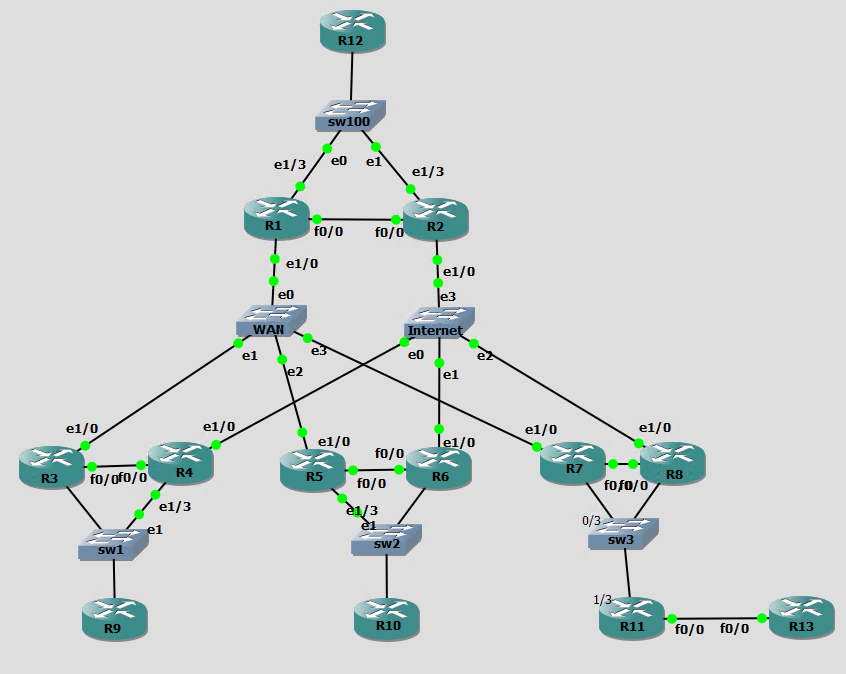

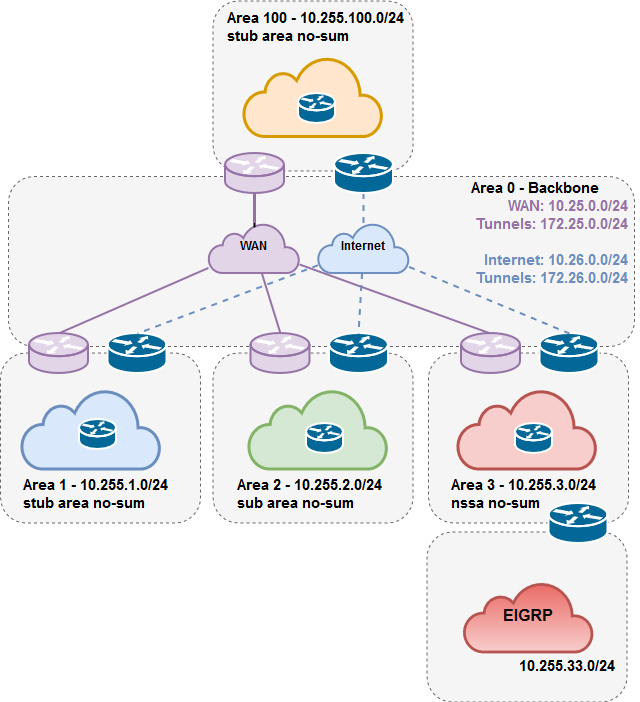

Last weekend I decided to try Cisco Modeling Labs (CML). This is Cisco’s network virtualization platform, comparable to GNS3 or EVE-NG. It replaced an older Cisco product called VIRL (Virtual Internet Routing Lab), offering more features and improved performance.
